Strengthening Patient Trust

March 12, 2026

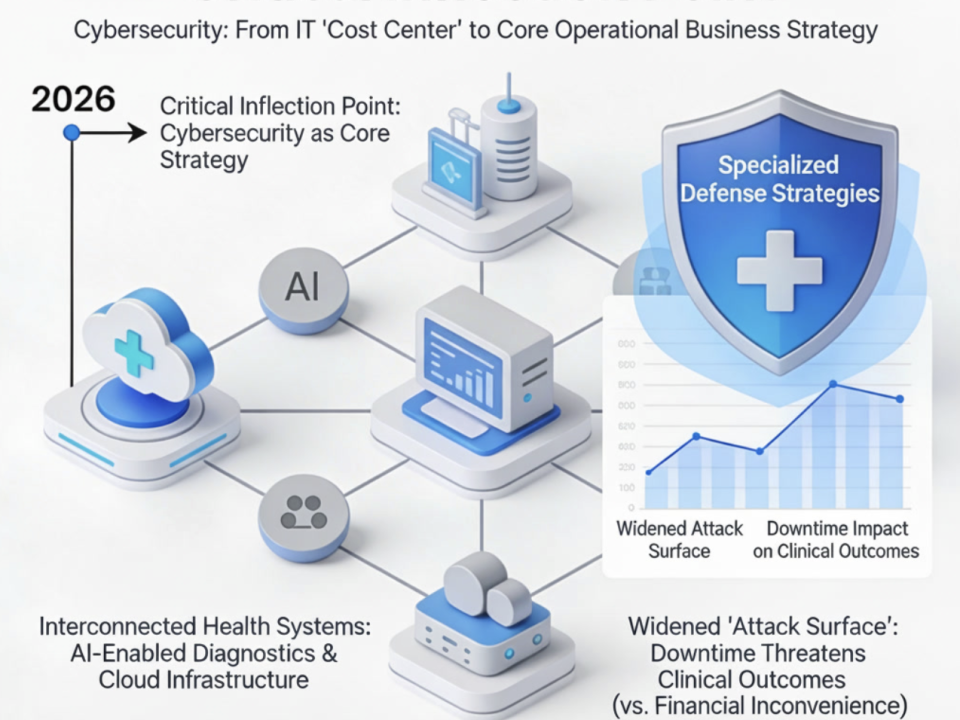

May 5, 2026

A recent analysis from HIMSS26 highlighted a critical tension currently felt across the industry: artificial intelligence is advancing at a clinical and operational pace that far outstrips the current regulatory framework. As federal agencies play catch-up and states begin to introduce a patchwork of disparate legislation, healthcare leaders are left navigating a void of undefined oversight, accountability, and liability.

At STIG, we view this not as a signal to halt innovation, but as a mandate for heightened operational discipline. AI adoption now requires a level of governance, security, and strategic oversight that goes beyond traditional “vendor management” models.

Moving Beyond Theoretical Risk

Healthcare is a high-consequence environment where decisions directly impact patient safety, clinical outcomes, and financial integrity. When AI enters this ecosystem, risks manifest rapidly. Even “assistive” AI requires deep transparency into data provenance, algorithmic logic, and long-term performance monitoring.

This urgency is compounded by the rise of agentic AI. As highlighted at HIMSS26, traditional regulatory models struggle to address systems capable of autonomous behavior or iterative evolution post-deployment. For healthcare executives, this means AI governance cannot be a static policy; it must be integrated into the core operating model.

Five Strategic Priorities for AI Governance

To navigate this transition, STIG recommends that healthcare organizations focus on five foundational pillars:

-

Establish Governance Prior to Deployment: Every AI use case must have a designated business owner and a documented risk profile (administrative, operational, or clinical). Governance should be proportional to the risk of the specific application.

-

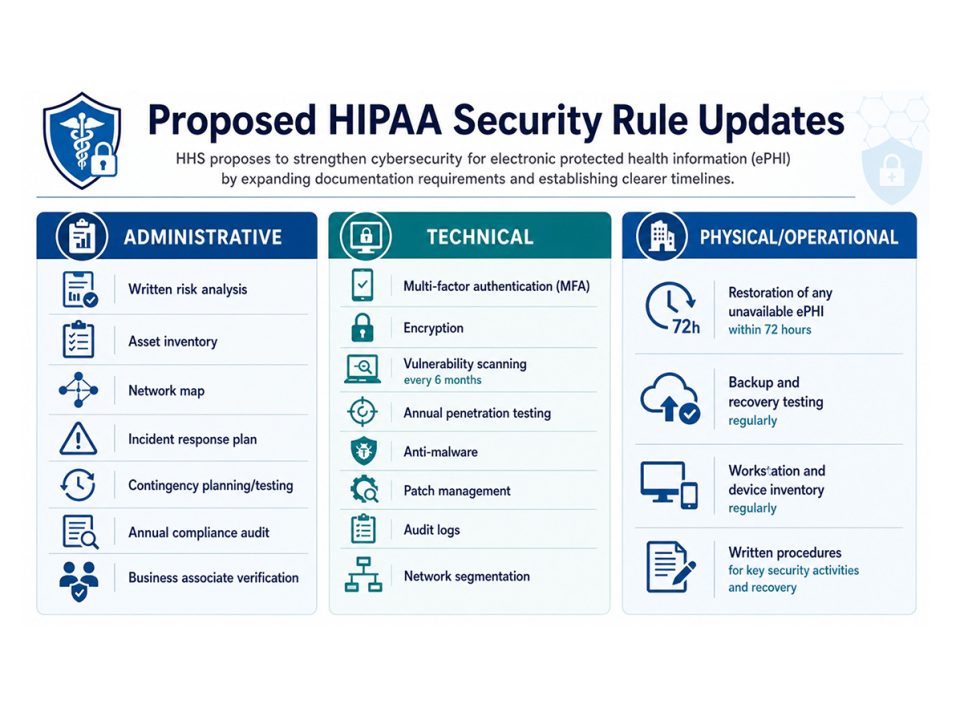

Embed Security and Privacy in the Lifecycle: Organizations must move beyond basic checklists to understand third-party data flows, PHI interactions, and the expanded attack surface introduced by LLMs and proprietary models.

-

Mandate Meaningful Human Oversight: “Human-in-the-loop” is more than a buzzword; it is a safety requirement. Humans must remain responsible for validating outputs, managing exceptions, and making the final consequential decisions in clinical or financial workflows.

-

Implement Post-Implementation Monitoring: AI performance can degrade due to “model drift” or changing data patterns. Oversight cannot end at the point of purchase; it requires ongoing monitoring of accuracy, security events, and workflow impact.

-

Build for a Patchwork Regulatory Landscape: With federal and state regulations in a state of flux, organizations cannot afford to wait for “perfect” clarity. The goal is a defensible, flexible governance model that maintains compliance across multiple jurisdictions.
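As one illustration of the monitoring pillar, the Python sketch below tracks how often an AI tool's output agrees with the human-validated outcome over a rolling window, and flags the tool for review when agreement falls meaningfully below its go-live baseline. The class name `AccuracyMonitor`, the window size, and the tolerance are illustrative assumptions rather than recommended values; a real program would tune them per use case and would also track security events and workflow impact.

```python
from collections import deque


class AccuracyMonitor:
    """Rolling agreement between AI outputs and human-validated outcomes.

    Hypothetical helper for illustration; the thresholds are assumptions.
    """

    def __init__(self, baseline_accuracy: float, window: int = 200,
                 tolerance: float = 0.05):
        self.baseline_accuracy = baseline_accuracy  # accuracy measured at go-live
        self.tolerance = tolerance                  # acceptable drop before review
        self.window = window                        # number of recent cases to keep
        self._results = deque(maxlen=window)        # True where AI matched the human

    def record(self, ai_output, validated_outcome) -> None:
        """Store whether the AI output matched the human-validated outcome."""
        self._results.append(ai_output == validated_outcome)

    def rolling_accuracy(self):
        """Share of recent cases where the AI matched; None if nothing recorded."""
        if not self._results:
            return None
        return sum(self._results) / len(self._results)

    def needs_review(self) -> bool:
        """Flag possible drift, but only once a full window has been observed."""
        if len(self._results) < self.window:
            return False
        return self.rolling_accuracy() < self.baseline_accuracy - self.tolerance
```

Waiting for a full window before flagging is a deliberate choice in this sketch: it avoids raising alarms on a handful of early cases, at the cost of a slower first signal.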

Critical Questions for Healthcare Leadership

As organizations move from pilot programs to enterprise-wide adoption, leadership must address these practical realities:

- Accountability: Who is ultimately responsible when a tool produces a flawed or biased result?

- Data Integrity: Which specific tools are authorized to access PHI or operationally sensitive data?

- Resilience: How will the organization respond to a patient safety or business continuity issue triggered by an AI-enabled process?
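The accountability and data integrity questions are easier to answer when every AI tool is recorded in a single inventory. The Python sketch below shows one possible shape for such an inventory, using the administrative, operational, and clinical risk profiles described earlier. The class names, field names, and example tools are hypothetical and stand in for whatever system of record an organization already uses.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    ADMINISTRATIVE = 1
    OPERATIONAL = 2
    CLINICAL = 3


@dataclass(frozen=True)
class AIUseCase:
    name: str
    business_owner: str          # the accountable person or role
    risk_tier: RiskTier
    phi_access_approved: bool = False


class UseCaseRegistry:
    """Hypothetical inventory of AI use cases, keyed by tool name."""

    def __init__(self):
        self._cases = {}

    def register(self, case: AIUseCase) -> None:
        # Refuse to record a tool that has no accountable owner.
        if not case.business_owner.strip():
            raise ValueError(f"{case.name}: a business owner is required")
        self._cases[case.name] = case

    def owner_of(self, name: str) -> str:
        """Answer 'who is responsible for this tool?'"""
        return self._cases[name].business_owner

    def tools_with_phi_access(self) -> list[str]:
        """Answer 'which tools are authorized to access PHI?'"""
        return sorted(c.name for c in self._cases.values() if c.phi_access_approved)


# Example entries (invented for illustration only).
registry = UseCaseRegistry()
registry.register(AIUseCase("scheduling-assistant", "Director of Access",
                            RiskTier.ADMINISTRATIVE))
registry.register(AIUseCase("sepsis-alert", "Chief Medical Officer",
                            RiskTier.CLINICAL, phi_access_approved=True))
```

Rejecting registrations without an owner enforces, at the point of entry, the principle that no tool goes live without a named accountable party.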

The STIG Perspective

The organizations that successfully harness AI will not necessarily be the ones that move the fastest, but those that build the most resilient frameworks for innovation. At STIG, we specialize in helping healthcare organizations align rapid technology adoption with the rigorous demands of regulated environments.

Healthcare AI is moving quickly. The appropriate response is neither fear nor blind acceleration—it is disciplined progress.